Investigate

Introduction

This document details how an analyst should conduct investigations and triage in the normal duties of their job. It will describe concepts and logic that enable analysts to look at data, ask investigative questions, evaluate those questions and arrive at assertions about what they have found.

An Alert

For the most part, analysts begin their investigative work when an alert is generated. Alerts are fantastic because they are a statement that something has happened and something needs to be done about it. Typically alerts are filled with information that an analyst can then use in their investigation. Alerts are generated by detections and these detections can come in many shapes and sizes.

Some just look for a particular action, like someone unlocking their car door. Others might have access to more context, like someone who isn’t the owner of the car unlocking the door. Going even further, some detections might look at how the car door was opened or at what time of day it happened. Others still monitor all these little data points and paint a picture of what they would expect someone getting into their car to look like (these are called baselines). Ultimately, a detection does not have to be an alert on its own: detections can be aggregated over time, or until a certain condition is met, and only then turned into an alert.
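To make these detection styles concrete, here is a minimal sketch in Python using the car door analogy. Every name in it (`DoorEvent`, `usual_hours`, the `threshold` of two signals) is hypothetical and chosen only for illustration; real detections depend entirely on your telemetry and tooling.

```python
from dataclasses import dataclass


@dataclass
class DoorEvent:
    car_owner: str
    actor: str
    action: str
    hour: int  # hour of day, 0-23


def atomic_detection(event):
    # Fires on the action alone: someone unlocked a car door.
    return event.action == "unlock"


def contextual_detection(event):
    # Adds context: the person unlocking is not the owner.
    return event.action == "unlock" and event.actor != event.car_owner


def baseline_detection(event, usual_hours):
    # Compares against expected behaviour: an unlock outside the usual hours.
    return event.action == "unlock" and event.hour not in usual_hours


def should_alert(event, usual_hours, threshold=2):
    # Detections are combined and only become an alert once enough
    # of them agree (the threshold is an assumption for this sketch).
    signals = [
        atomic_detection(event),
        contextual_detection(event),
        baseline_detection(event, usual_hours),
    ]
    return sum(signals) >= threshold
```

With this sketch, the owner unlocking the car at a usual hour trips only the atomic detection and stays below the threshold, while a stranger unlocking it at 3am trips all three and becomes an alert.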

It’s really important to understand what type of alert you’re looking at (not what the alert is, we aren’t there yet), because how it was generated decides what your investigative questions look like and the distance between performing analysis and taking action on the alert (very scary alert = very quick response). You also need to understand what your alert tooling can and can’t give you, because the less capability you have, the shorter the distance to you making a decision.

Picking what's important

Imagine a conveyor belt. You have a shopping basket, and it’s your job to fill that basket with things that look scary or interesting. Along the conveyor belt come four failed user authentication requests. That might be interesting, but we wouldn’t want it in our basket just yet because we see those on the conveyor belt all the time. But then you pick it up and the label says it belongs to a really important person, say the head of finance. That’s a bit different to the usual failed authentication request and causes us more worry, so we put it in our basket.

Next on the conveyor belt is an application connecting to the internet. You take a look and recognise it’s an application your company always sees on the conveyor belt, and a quick check of the address for the network connection shows it all matches up, so you don’t bother adding it to your basket. Then along comes a Word document that invoked a script interpreter. We know this can sometimes happen, especially in teams and departments that do a lot of data processing, because it can speed things up. But we also know that pairing script interpreters with productivity apps is a really common adversary technique, so we make sure to put that in our basket for deeper analysis later.
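The conveyor belt decisions above can be sketched as a small triage function. This is an illustration only: the field names (`kind`, `user`, `process`, `parent`, `destination`) and every entry in the lookup sets are assumptions, not values from any real environment.

```python
# Hypothetical reference data an analyst might maintain.
HIGH_VALUE_USERS = {"head.of.finance"}
KNOWN_GOOD_CONNECTIONS = {("updater.exe", "203.0.113.10")}
PRODUCTIVITY_APPS = {"winword.exe", "excel.exe"}
SCRIPT_INTERPRETERS = {"powershell.exe", "wscript.exe", "cscript.exe"}


def belongs_in_basket(item):
    """Return True if the item deserves deeper analysis later."""
    kind = item["kind"]
    if kind == "failed_auth":
        # Failed logons are common; who they belong to is what matters.
        return item["user"] in HIGH_VALUE_USERS
    if kind == "network_connection":
        # Skip only when both the application and its address are expected.
        return (item["process"], item["destination"]) not in KNOWN_GOOD_CONNECTIONS
    if kind == "process_start":
        # A productivity app spawning a script interpreter goes in the basket.
        return (
            item["parent"] in PRODUCTIVITY_APPS
            and item["process"] in SCRIPT_INTERPRETERS
        )
    # Anything we do not recognise gets a look (a choice made for this sketch).
    return True
```

Keeping unknown item types in the basket by default is a deliberate choice here: it is safer to look at something unfamiliar than to drop it silently.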

With this process in mind, it is clear there are a few things any analyst needs to be an expert in. First and foremost is what adversary procedures exist and how their techniques manifest in our systems (https://www.goblinloot.net/2023/02/writing-detections-when-stuck-with-edr.html). This is an area of study you can never stop, as you are literally competing against other humans who are trying to make sure they don’t land in your basket. The MITRE ATT&CK framework, which captures how adversaries are going to be compromising our clients (https://attack.mitre.org/groups/), is a great introduction, but you must also keep up with threat reports and news. You can use the following list to achieve this: https://github.com/QueenSquishy/Zombie/blob/main/Reading/Daily. Luckily, in our team we also have the ability to recreate actual adversarial behaviour and see how it would appear in our telemetry and tooling. An interesting observation is that the volume and diversity of adversary techniques grows quite slowly, because adversaries simply take the path of least resistance. Additionally, a more recent trend is adversaries decreasing the sophistication of their attacks, as relying on human nature yields much more gain for much less effort.

For an analyst, this decrease in sophistication means we need to spend a lot more time baselining behaviour and capturing data points related to identities rather than reverse engineering malware or writing signatures. It’s now a shared perception across teams that identity is the new endpoint, and the criticality with which we treat our detection pipelines and investigations for identity-related alerts must rise accordingly.

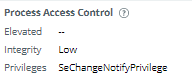

Next, an analyst needs to understand what capability they have to ask questions:

- How do you establish if the file is unique?

- How do you see what other behaviour the user was doing?

- How far back can we look?

- What asset is involved?

- Do we need to look at other assets?

- Are there other detections that can help build a better picture?

- How would the behaviour look if it was an adversary?

- How would the behaviour look if it was a legit administrator?

There are countless questions like this, and as an analyst you need to know how to find the answers. Importantly, you must not just find the answer but also confirm that what you found hasn’t been manipulated or subject to bias. If there are questions you don’t know how to answer, you need to discover how.

On the topic of bias, we are going to cover a few. First and foremost, humans love shortcuts: if you give them an idea (which an alert will always do), they will be drawn to chase and confirm that the idea is true, ignoring variables and other legitimate data that might outright disprove or weaken it. This is often called confirmation bias. Being actively conscious of it is really important because, going back to the very beginning, we always need to consider the distance between analysis and action.

So how can we take control of this bias? We stick with what we know for certain first. If we triage a brute force alert, we know:

- We are looking for large volumes of events

- Authentication methods may differ

- The source of the authentication request may be new
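The three known points above can be checked in a repeatable way before any riskier theories are explored. The sketch below assumes authentication events are dictionaries with hypothetical `result`, `method` and `source` fields, and that `volume_threshold` is a value you would tune to your own environment.

```python
def brute_force_facts(events, known_sources, volume_threshold=20):
    """Summarise what we know for certain about a suspected brute force."""
    failures = [e for e in events if e["result"] == "failure"]
    methods = {e["method"] for e in failures}
    sources = {e["source"] for e in failures}
    return {
        # Are we looking at a large volume of events?
        "failure_count": len(failures),
        "high_volume": len(failures) >= volume_threshold,
        # Do the authentication methods differ?
        "auth_methods": sorted(methods),
        # Is the source of the requests new to us?
        "new_sources": sorted(sources - set(known_sources)),
    }
```

Recording these facts first gives the investigation a fixed foundation that later, more speculative questions can be tested against.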

Deliberately covering the bases we know will validate an idea then gives us the foundation to be more explorative and ask riskier questions like:

- Is the source already compromised?

- Could it be a novel technique?

- Is it a misconfiguration issue?

The next bias we are going to cover is spotlight bias: the tendency to look only where you can see, or where it is easiest to see. This leads to incomplete investigations and can be defeated with a creative mindset. As an example, if you are trying to identify when a user first accessed a system and you check for EventID 4624 (a successful logon) and it is not present, does that mean no one accessed the system? Of course not, so let’s widen our spotlight and look at things like:

- Can we see file event activity suggesting the user profile is being built?

- Are there any scheduled tasks set to execute on logon that ran recently?

- Users browse the internet almost always, can we see browser activity?
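One way to widen the spotlight is to treat every evidence source as an equal candidate for answering the question. The sketch below assumes you have already collected timestamps per source; the source names and the `earliest_user_activity` helper are hypothetical.

```python
from datetime import datetime
from typing import Dict, List, Optional, Tuple


def earliest_user_activity(
    evidence: Dict[str, List[datetime]],
) -> Optional[Tuple[str, datetime]]:
    """Return the source and time of the earliest sign of user activity.

    Sources with no events are skipped rather than treated as proof
    that nothing happened.
    """
    first_seen = {src: min(times) for src, times in evidence.items() if times}
    if not first_seen:
        return None
    source = min(first_seen, key=first_seen.get)
    return source, first_seen[source]
```

If the logon events list is empty but profile file events exist, the function still returns an answer from the file events, which is exactly the point: an empty log is an absence of evidence, not evidence of absence.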

Importantly, what makes spotlight bias so dangerous is that sometimes you can’t control where the spotlight lands and it can be difficult to move. As an analyst, you should have both an expansive skill set and tool set to help mitigate this.

Next is novelty bias. This one is really simple: humans love fancy, shiny new things, but this new threat or tool will almost always hold zero relevance to you, no matter how cool it is to look at. Of course, you need to make your own assessment, but do not expend energy on something simply because it is new. Brilliance in the basics always comes first.

Finally, we are going to cover familiarity bias, as it’s one that will strike every analyst and it’s a primary component of the alert fatigue we all know and love. This bias manifests because, as described earlier for detections, security technology doesn’t know when something is evil, just when it could be, so you’re effectively going to be looking at a lot of “could be” all the time. There are a few things you will always need to be deliberately conscious of to tackle this:

- Are you investigating less the more you see the alert?

- Do your notes usually look the same across the same alert type?

- Adversary techniques change, have your investigative questions?

- Your technology changes, has your investigation expanded appropriately too?
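The question about notes looking the same can be roughly measured. This sketch uses `difflib.SequenceMatcher` from the Python standard library to score how similar a set of investigation notes are; the `average_note_similarity` helper is hypothetical, and any cut-off for "too similar" is something you would have to decide yourself.

```python
from difflib import SequenceMatcher
from itertools import combinations


def average_note_similarity(notes):
    """Average pairwise similarity of notes, from 0.0 to 1.0."""
    pairs = list(combinations(notes, 2))
    if not pairs:
        # Fewer than two notes means there is nothing to compare.
        return 0.0
    total = sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs)
    return total / len(pairs)
```

A score close to 1.0 across the same alert type suggests notes are being copied rather than written, which is worth a conversation rather than an automatic conclusion.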

There is never an alert that does not warrant a complete investigation. However, there are some important stipulations:

- It’s the analyst’s responsibility to escalate issues with the detection and advocate for fixing them

- Analysts work the alerts all day, so they must actively drive the quality of the investigative AND triage process

- A communication channel between analysts and engineers must exist

- Engineers must reduce the investigative load on analysts as much as possible

- If a computer CAN do it then a computer SHOULD do it. Keep toil as close to zero as possible

- Engineers are the innovators for analysts, they must be building new tools, visualisations and case management improvements.

- Detection pipelines should balance recall and precision, not analysts’ brains.
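For reference, precision and recall for a detection can be computed from triage outcomes. This is a minimal sketch using the standard definitions; the counts would come from your own case management data, and the function name is hypothetical.

```python
def precision_recall(true_positives, false_positives, false_negatives):
    """Return (precision, recall) for a detection.

    precision: of everything the detection flagged, how much was real.
    recall: of everything real, how much the detection flagged.
    """
    flagged = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / flagged if flagged else 0.0
    recall = true_positives / actual if actual else 0.0
    return precision, recall
```

For example, a detection with 5 true positives, 15 false positives and no misses has a recall of 1.0 but a precision of only 0.25, meaning analysts absorb three benign alerts for every real one.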

Summary

The depth of this subject is incredible, and if you’re not constantly chasing it and constantly looking to improve, you will fail. Investigation and analysis is an entire field that very few people ever really study. Keep learning and keep stopping adversaries.