Attack Simulations for your SOC

Introduction

SOC analysts spend the vast majority of their time looking at the same data and patterns over and over again. They develop muscle memory that helps them use their platforms without much effort, but it also ingrains potentially poor practices. Most analysts are new to the industry and are taking certifications like CompTIA CySA+ and Microsoft SC-200, but none of these certifications teach them what genuine malicious actions look like. So how do you train a team to detect and respond to malicious activity in an ever-morphing threat landscape? You put them through the ring of fire. You make them triage incidents that are as close to real as possible, and you monitor their behavior and progress so that you can keep tuning your processes.

Analysts are arguably the single most important role within a SOC. They carry the burden of triaging alerts and correctly identifying whether malicious activity is occurring. They are the first, middle and last line of defense. There is an unfortunate reality, however: analyst positions are treated as beginner roles, which leaves a lot of analysts unprepared to meet their objectives. This in turn requires leadership to invest actively and rigorously in their personal and professional development. Any decent leader should welcome this challenge head-on, and if you're an analyst who feels like that isn't happening, then you have bad leadership.

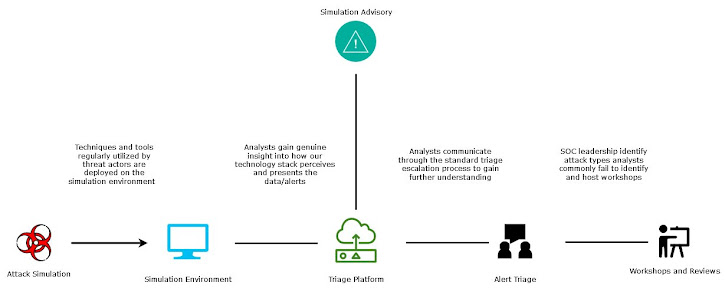

Attack Simulations

Attack simulations are the execution of tools, techniques and procedures commonly used by genuine adversaries to generate telemetry, data and often alerts, so that certain questions can be answered: Am I protected by control X? Will the analyst identify the activity as malicious? Will the activity be captured in my telemetry? The complexity of simulations can vary a lot, and simulations are often confused with emulations. When running a simulation, there are a few rules or objectives you want to keep to:

- Plan your simulation with the purpose in mind. Simulations to train analysts look very different (a lot quieter and more specific) to simulations that test tools.

- Unless you're retesting something that failed, keep simulations distinct from each other.

- Try to cover multiple steps in the kill chain so that the simulation paints a bigger picture than a single operation.

- Rigorously record details about your simulation, such as what was run and where, the associated entities and the associated timeframe.

- Maintain a database of simulations and their outcomes so you can use historic data to guide future simulations and increase their value.

The above points are important for conducting high-quality simulations, but you should also consider your target audience. If you're just trying to train a new starter, don't push them too much.
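
The record-keeping rules in the list above could be sketched roughly as follows. This is a minimal illustration, not a prescribed schema: the `SimulationRecord` fields, the `simulations` table and the helper names `open_store`, `save` and `history` are all assumptions made for the example, and SQLite is used only because it ships with Python.

```python
import json
import sqlite3
from dataclasses import dataclass
from datetime import datetime


@dataclass
class SimulationRecord:
    """One executed simulation. All field names are illustrative."""
    name: str
    purpose: str                  # e.g. "analyst training" or "control test"
    hosts: list[str]              # where the simulation was run
    entities: list[str]           # accounts, IPs, files and so on
    kill_chain_steps: list[str]   # steps of the kill chain that were covered
    started_at: datetime
    ended_at: datetime
    outcome: str = "pending"      # e.g. "identified" or "missed"


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the simulation database."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS simulations (
            id INTEGER PRIMARY KEY,
            name TEXT NOT NULL,
            purpose TEXT NOT NULL,
            hosts TEXT NOT NULL,
            entities TEXT NOT NULL,
            kill_chain_steps TEXT NOT NULL,
            started_at TEXT NOT NULL,
            ended_at TEXT NOT NULL,
            outcome TEXT NOT NULL
        )
        """
    )
    return conn


def save(conn: sqlite3.Connection, rec: SimulationRecord) -> int:
    """Store one record; lists are kept as JSON, timestamps as ISO 8601."""
    cur = conn.execute(
        "INSERT INTO simulations (name, purpose, hosts, entities, "
        "kill_chain_steps, started_at, ended_at, outcome) "
        "VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
        (
            rec.name,
            rec.purpose,
            json.dumps(rec.hosts),
            json.dumps(rec.entities),
            json.dumps(rec.kill_chain_steps),
            rec.started_at.isoformat(),
            rec.ended_at.isoformat(),
            rec.outcome,
        ),
    )
    conn.commit()
    return cur.lastrowid


def history(conn: sqlite3.Connection, purpose: str) -> list[tuple[str, str]]:
    """Return (name, outcome) pairs for past simulations with this purpose."""
    return conn.execute(
        "SELECT name, outcome FROM simulations "
        "WHERE purpose = ? ORDER BY started_at",
        (purpose,),
    ).fetchall()
```

Querying `history` before planning the next exercise is one way to let historic outcomes guide future simulations. A spreadsheet or a ticketing system would serve the same purpose if that is what your team already uses.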

Inside a SOC

Metrics

- Rate of successful identification

- Time to triage

- Types of attacks simulated
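
As a rough illustration of how the three metrics above might be computed from recorded results, consider the sketch below. The `TriageResult` structure and its field names are assumptions made for the example; in practice the data would come from whatever case management or SIEM tooling you already use.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class TriageResult:
    """Outcome of one simulated incident. Field names are illustrative."""
    attack_type: str
    alert_raised_at: datetime
    triaged_at: Optional[datetime]   # None if the alert was never triaged
    identified_as_malicious: bool


def identification_rate(results: list[TriageResult]) -> float:
    """Fraction of simulations correctly identified as malicious."""
    if not results:
        return 0.0
    return sum(r.identified_as_malicious for r in results) / len(results)


def mean_time_to_triage(results: list[TriageResult]) -> Optional[timedelta]:
    """Average delay between alert and triage, ignoring untriaged alerts."""
    durations = [
        r.triaged_at - r.alert_raised_at
        for r in results
        if r.triaged_at is not None
    ]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


def attack_type_counts(results: list[TriageResult]) -> Counter:
    """How many simulations were run per attack type."""
    return Counter(r.attack_type for r in results)
```

Untriaged alerts are excluded from the time-to-triage average here. That is a design choice, and you may prefer to count them separately so that missed alerts don't quietly disappear from the numbers.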

Preparedness

Technology

The technology used when conducting simulations really depends on the skills available. You can use a collection of custom scripts or manual instructions, utilize open-source tooling, or deploy paid toolsets. So long as you can achieve the objectives and rules set out above, the technology used does not matter much. If you're looking to test analysts, the three main things your technology needs to achieve are:

- Data, telemetry and alerts generated by the simulations are indistinguishable from genuine incidents.

- Each simulation is distinct from the next, so analysts don't pick up on commonalities and instantly recognize it as a simulation.

- Simulations reflect genuine activity observed in the wild.
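
One hedged way to check the second requirement before running a simulation is to compare its planned techniques against past simulations. The sketch below uses Jaccard similarity over sets of technique labels. The labels, the `too_similar` helper and the 0.5 threshold are all assumptions chosen for illustration, and overlap in techniques is only one of many commonalities an analyst might notice.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the overlap of two sets divided by the size of their union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def too_similar(
    planned: set[str],
    past: dict[str, set[str]],
    threshold: float = 0.5,
) -> list[str]:
    """Names of past simulations whose techniques overlap heavily with the plan."""
    return [
        name
        for name, techniques in past.items()
        if jaccard(planned, techniques) >= threshold
    ]


# Hypothetical example data: technique labels are free-form strings.
past_simulations = {
    "sim-01": {"phishing", "powershell"},
    "sim-02": {"lateral-movement", "exfiltration"},
}
planned = {"phishing", "powershell", "credential-dumping"}
flagged = too_similar(planned, past_simulations)
```

With this example data, `flagged` contains only `sim-01`, which shares two of the three planned techniques. A flagged plan is a prompt to vary the hosts, accounts, tooling or timing, not a reason to drop the simulation.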